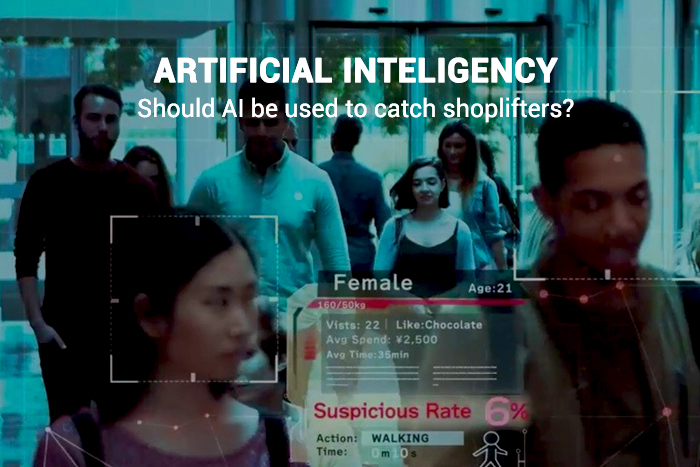

In Japan, new software based on artificial intelligence observes the body language of shoppers and flags signs that they may be planning to shoplift. The software is named VaakEye and was developed by the Tokyo startup Vaak. It differs from similar products, which work by matching faces against criminal records; VaakEye instead analyzes a person’s behavior to predict illegal activity.

Ryo Tanaka, the founder of the Tokyo startup, said that his team fed the algorithm 100,000 hours’ worth of surveillance data to train it to monitor everything from shoppers’ facial expressions to their movements and clothing. VaakEye launched in April and is now in use in more than 50 stores across Japan.

Vaak claims that losses from shoplifting dropped by about 77 percent during a test period in Japanese convenience stores. According to the Global Shrink Index, shoplifting cost the global retail industry about $34 billion in 2017, a figure that tools such as VaakEye could help reduce.

Ethical Questions About the Use of VaakEye

Using artificial intelligence to catch thieves raises several ethical questions. Michelle Grant, a retail analyst at Euromonitor, said that while the purpose is to prevent theft, it is unclear whether it is lawful, or even moral, to stop someone from entering a store based on this software.

Ryo Tanaka responded that this decision should not rest with the software developer. What the company provides is information about the suspicious behavior the system detects. It does not decide who is a criminal; that is up to the shop owner to determine.

Yet that is precisely what concerns Liberty, a human rights charity that is campaigning to ban facial recognition technology in the United Kingdom. Hannah Couchman, Liberty’s advocacy and policy officer, said that in a retail environment, a private body is starting to perform something akin to a police function. Liberty is also worried about the potential of artificial intelligence to fuel discrimination.


In 2018, a study by MIT and Stanford University found that several commercial facial-analysis programs exhibited skin-type and gender biases. Tanaka argues that because Vaak’s AI relies on behavior rather than race or gender, this should not be an issue.